FOR IMMEDIATE RELEASE

On the Level with Hurricane Matthew

Data assimilation using DesignSafe-CI portal improves coastal water level models of storm surge

Flooding from hurricane storm surge can devastate lives and property. A new method yields substantially smaller errors in water level estimates from computer simulations. The work won a DesignSafe Dataset Award 2021. Photo of Kinston, North Carolina, on October 14, 2016. Credit: FEMA News Photos

AUSTIN, Texas, May 25, 2021 - Hurricane storm surge is one of the most hazardous and difficult parts of a hurricane to forecast. Researchers at the University of North Carolina, Chapel Hill (UNC) have developed a data assimilation method for improving multi-day forecasts of coastal water levels.

Data assimilation combines real-time measurements with model simulations. The method UNC researchers developed yielded substantially smaller errors in the water level estimates. Data and simulations from their case study of Hurricane Matthew are publicly available online through the DesignSafe cyberinfrastructure.

Lead author Taylor Asher, Department of Marine Sciences at UNC, was awarded a DesignSafe Dataset Award 2021, which recognized the dataset's diverse contributions to natural hazards research.

Surge can devastate life and property. High winds of more than 70 miles per hour can spray walls of breaking water over 20 feet high and more than a mile inland. Surge caused 49 percent of the hurricane deaths in the U.S. (1963 to 2012), and it damages on average 10 billion dollars of property every year (1900 to 2005). Hurricane Matthew was the most powerful storm of the 2016 season, killing 28 people from flooding and causing 10.3 billion dollars of damage.

Asher's team explored the physical components influencing water levels from storm surge that are not represented in even the best simulation codes, such as the widely used Advanced Circulation model (ADCIRC). According to Asher, the physics is too complicated and too costly for a model to account for everything, especially in forecast settings where simulations must be completed quickly to be useful. Errors in simulated water levels can be dominated by multi-day processes that are not included in the model, such as baroclinic processes, major oceanic currents, precipitation, steric fluctuations, and far-field atmospheric forcing.

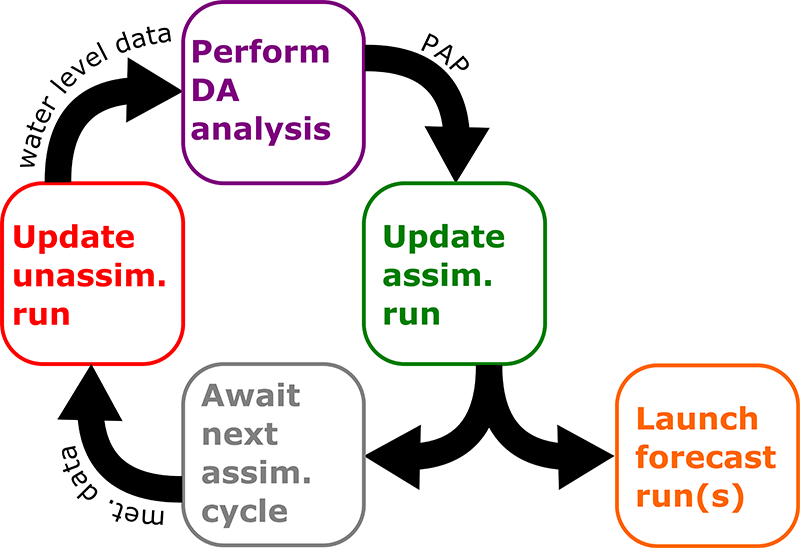

The data assimilation system can be broken down into four steps: (1) performing an unassimilated simulation, (2) time-averaging or low-pass filtering the difference between this simulation and observed water levels at observation sites, (3) generating a spatial difference field from these differences, and (4) repeating the simulation with the added correction applied in the model. Credit: DOI: 10.1016/j.ocemod.2019.101483.
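Step (2) above can be illustrated with a minimal Python sketch. It is not the team's code: the function names, the hourly sampling, the idealized 12-hour tide, the 0.2 m offset, and the 24-hour averaging window are all assumptions chosen for illustration. It shows how averaging the observed-minus-simulated difference over whole tidal cycles removes the fast tidal error and leaves the slowly varying offset.

```python
import math

def residual(observed, simulated):
    """Observed minus simulated water level at one station, sample by sample."""
    return [o - s for o, s in zip(observed, simulated)]

def moving_average(values, window):
    """Running mean over `window` consecutive samples.

    Returns len(values) - window + 1 averages; acts as a simple low-pass filter.
    """
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    running = sum(values[:window])
    averages = [running / window]
    for i in range(window, len(values)):
        running += values[i] - values[i - window]
        averages.append(running / window)
    return averages

# Hypothetical hourly series over four days (values in meters).
hours = range(96)
# Assumed model output: an idealized 12-hour tide only.
simulated = [0.5 * math.sin(2 * math.pi * t / 12) for t in hours]
# Assumed gauge record: slightly larger tide plus a 0.2 m slow offset.
observed = [0.6 * math.sin(2 * math.pi * t / 12) + 0.2 for t in hours]

residuals = residual(observed, simulated)
# A 24-hour window spans two full cycles of the idealized tide,
# so the tidal part of the error averages out.
smoothed = moving_average(residuals, 24)
```

In this idealized case every entry of `smoothed` sits at the 0.2 m offset, which is the quantity that would be passed on to step (3). Real tides are not exactly periodic at 12 hours, so an operational filter would need a more careful design.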

When most people think of a hurricane, its intense, windy core comes to mind. But on its perimeter, there are also atmospheric pressure changes and winds going in different directions. Those far-field effects can have a sizable influence on the water levels that determine how severe flooding gets.

"One of the bigger realizations we made is of how strong these far-field wind effects could be on the total water level signal," Asher said. "We found a fairly low-cost data assimilation method was able to have a really big improvement on the simulated water levels and really improve the quality and accuracy of the simulations."

Asher described the assimilation technique they used as optimal interpolation, which allowed them to use the time-averaged difference between observed and simulated water levels to create a water level error surface that confines the error and filters out high-frequency fluctuations such as astronomical tides. They then applied that correction back into the model as a forcing term to basically push water in the simulation to where it should be and away from where it shouldn't.
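As a rough illustration of how station differences can be spread into an error surface, the sketch below implements textbook optimal interpolation with a zero background field. It is not the published implementation: the Gaussian covariance, the 100 km length scale, the variances, the station coordinates, and all function names are assumptions made for this example, and the step that feeds the surface back into the model as a forcing term is not shown.

```python
import math

def gaussian_cov(p, q, sigma2, length):
    """Assumed background-error covariance between points p and q (x, y in km)."""
    d2 = (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2
    return sigma2 * math.exp(-d2 / (2.0 * length ** 2))

def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x

def oi_correction(stations, residuals, targets,
                  sigma2=1.0, length=100.0, obs_var=0.01):
    """Interpolate station residuals to target points.

    Computes C_ts (C_ss + R)^-1 d, where C is the assumed covariance,
    R = obs_var * I is the observation-error covariance, and d holds the
    time-averaged observed-minus-simulated differences.
    """
    n = len(stations)
    system = [[gaussian_cov(stations[i], stations[j], sigma2, length)
               + (obs_var if i == j else 0.0)
               for j in range(n)] for i in range(n)]
    weights = solve(system, residuals)
    return [sum(gaussian_cov(t, s, sigma2, length) * w
                for s, w in zip(stations, weights))
            for t in targets]

# Hypothetical stations 50 km apart with averaged differences in meters.
stations = [(0.0, 0.0), (50.0, 0.0)]
station_residuals = [0.2, 0.1]
targets = [(0.0, 0.0), (25.0, 0.0), (1000.0, 0.0)]
surface = oi_correction(stations, station_residuals, targets)
```

With a nonzero `obs_var` the surface does not match the station values exactly, which reflects measurement uncertainty. Far from any station the correction decays toward zero, so the simulation is left unchanged where there is no information.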

They used the ADCIRC model coupled with the SWAN wave model.

"We focused on the lower frequency component of the water level errors," Asher said. "These are water level changes that occur over the course of usually a few days, since the model is good at capturing everything that happens daily, or even more often."

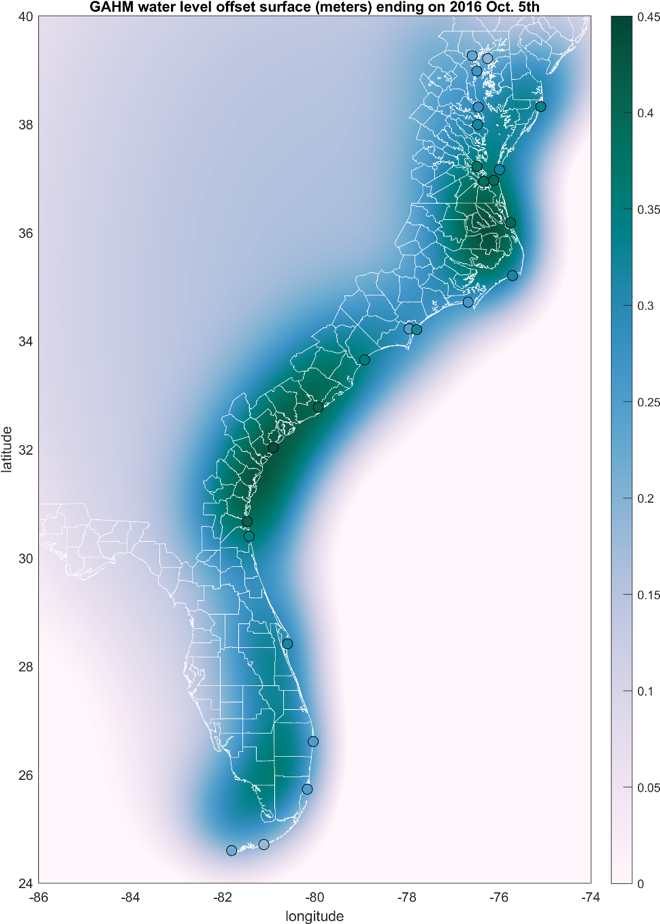

Asher's team used three different sources of meteorological forcing for surface winds and sea level pressure: the Generalized Asymmetric Holland Model (GAHM); a combination of GAHM with North American Mesoscale Forecast System (NAM) fields; and a reanalysis by OceanWeather Inc. that includes stepped frequency microwave radiometer (SFMR) data.

Water level anomaly around Hurricane Matthew based on Asher's study data. Numeric labels indicate the difference (in meters) between the OI water level anomaly surface and the simulated mean water level at the site; white lines are coastline and county boundaries. Credit: Taylor Asher, UNC.

"The DesignSafe interface is easy to use and it's also easy to SSH. It gives you a command line so you can type to move things around, instead of just a point-and-click interface. Having the ability to do both was really advantageous," Asher said.

Ultimately, improved water level forecasts help people on the ground respond to hurricane storm surge. It can be critical to know not only how high the water will get, but also how quickly it will rise.

"Knowing the timing of the water level coming in is critical for determining when to close floodgates," Asher said. "The advances that we've made are going to have a big improvement on timing estimation."

The National Oceanic and Atmospheric Administration (NOAA) now uses the data assimilation technique developed by Asher and colleagues in its main water level forecasting model, called the Extratropical Surge and Tide Operational Forecast System (ESTOFS).

Said Asher: "Like a lot of things in science, the goal is to produce something as detailed, accurate, and simple as you can. There are a lot of complexities and challenges in the science. Providing a system that allows comprehensive publication of the simulations (inputs and outputs), observations and analysis data, and all source code makes DesignSafe an invaluable tool. It means that I was able to transfer and publish our work in a way that means anyone could access the data and easily run the code with new data applicable for their project. This sort of reproducibility and transparency facilitates great science, and means that the nuance and complexity needn't be reduced for the sake of publication."

DesignSafe is a comprehensive cyberinfrastructure that is part of the NSF-funded Natural Hazard Engineering Research Infrastructure (NHERI). It provides cloud-based tools to manage, analyze, understand, and publish critical data for research on the impacts of natural hazards. The capabilities within the DesignSafe infrastructure are available at no cost to all researchers working in natural hazards. The cyberinfrastructure and software development team is located at the Texas Advanced Computing Center (TACC) at The University of Texas at Austin, with a team of natural hazards researchers from the University of Texas, the Florida Institute of Technology, and Rice University comprising the senior management team.

NHERI is supported by multiple grants from the National Science Foundation, including the DesignSafe Cyberinfrastructure, Award #1520817.